In a new paper, experts working at the intersection of robotics, machine learning, and physics-based simulation share how computer simulation could accelerate the development of smart robots that might interact with humans…

Jeffrey Trinkle has always had a keen interest in robot hands. And, though it may be a long way off, Trinkle, who has studied robotics for more than 30 years, says he’s most compelled by the prospect of robots performing “dexterous manipulation” at the level of a human “or beyond”.

“I’ve always felt that for robots to be really useful they have to pick stuff up, they have to be able to manipulate it and put things together and fix things, to help you off the floor and all that,” he says, adding: “It takes so many technical areas together to look at a problem like that that a lot of people just don’t bother with it.”

But Trinkle, a faculty member and chair of the Computer Science and Engineering Department at Lehigh University, a private research university in Bethlehem, Pennsylvania, USA, does more than bother with it. He and his team are working on projects that involve just such technical challenges.

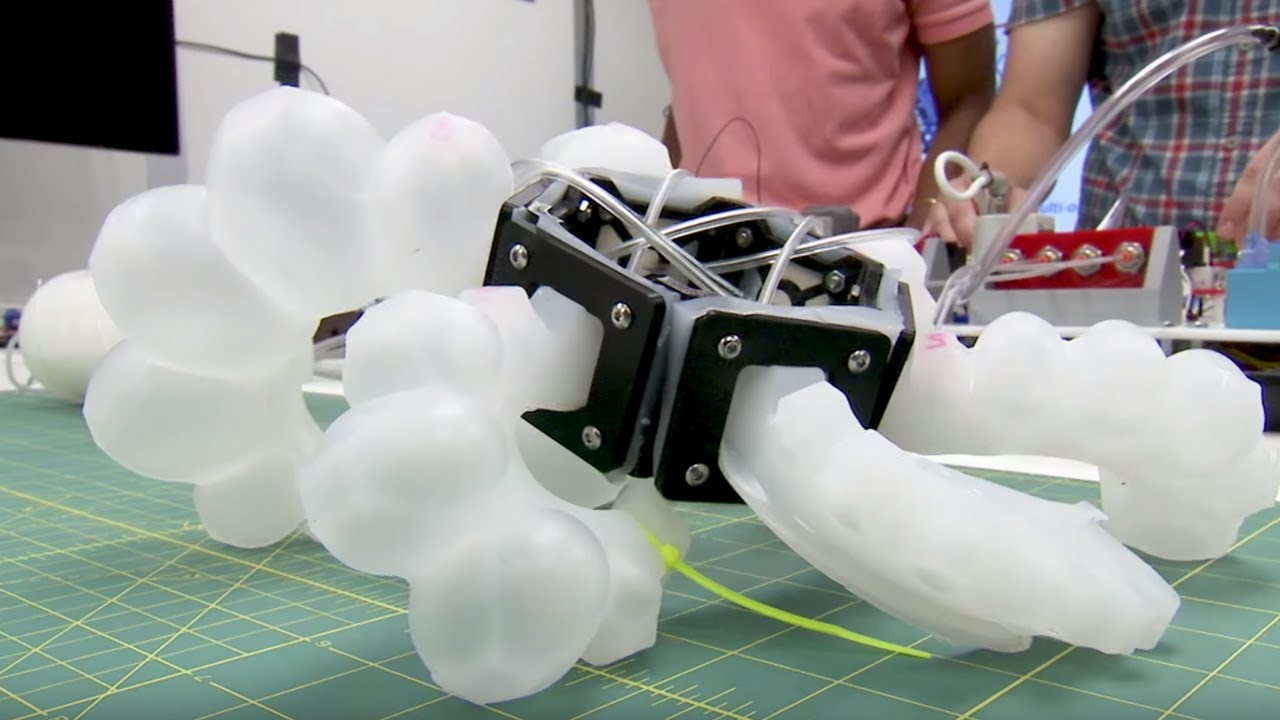

One such project is a collaboration to develop a new approach to the design and construction of soft robots – the future of human-machine collaboration, says Trinkle – inspired by the movement of natural muscles in soft animal structures. Think: giraffe tongues, octopus tentacles and elephant trunks. Computer simulation is key to developing this approach.

Trinkle, along with colleagues at other institutions, has co-authored a ‘perspective’ paper, ‘On the use of simulation in robotics: Opportunities, challenges, and suggestions for moving forward’, that appears in the latest issue of the Proceedings of the National Academy of Sciences. It argues that “…well-validated computer simulation can provide a virtual proving ground that, in many cases, is instrumental in better understanding safely, faster, at lower costs, and more thoroughly how the robots of the future should be designed and controlled for safe operation and improved performance.”

“Against this backdrop,” continue the authors, “we discuss how simulation can help in robotics, barriers that currently prevent its broad adoption, and potential steps that can eliminate some of these barriers.”

The article summarises the points of view expressed during a 2018 National Science Foundation/US Department of Defence/National Institute of Standards and Technology workshop dedicated to the topic. The meeting brought together participants from a range of organisations, disciplines, and fields. The expertise represented comes from an intersection of robotics, machine learning, and physics-based simulation, say the authors.

“The pressing question is how to jump start a robotics-simulation cross-pollination process that would speedily transition the effort from the ‘academic debate’ phase into a ‘simulation-enabled building and demonstration of technology’ phase. This transition can be catalysed by a sustained decade-long financial commitment, which would ensure funding for cross-disciplinary efforts that promote collaboration, competition, and compilation of open repositories of validated models and source code.

“As witnessed in the aerospace and automotive industries, the paradigm shift to digital, while manifestly impactful, took decades to coalesce. Learning from this experience, the hope is that simulation will lead to breakthroughs in the design of smart robots in a matter of years rather than decades.”

Like a baby learning to crawl

Trinkle’s soft robots project is a collaboration with Yale University, the University of Washington and Brown University, funded by a National Science Foundation Emerging Frontiers in Research and Innovation grant. His role is to use mathematical models, along with computer science techniques, such as search algorithms, to develop the computer system that ‘tells’ the robot how to move.

He offers the Roomba, the robotic vacuum that moves across the floor autonomously, as an accessible example. “Roombas use the same kind of technologies we are using, just in a very simplified setting,” says Trinkle. “Roombas know that there’s a wall. They have an internal map that ensures they can move around without bumping into things too much.”

Trinkle says this map functions similarly to the human brain. “Your brain sends signals to your muscles and they change their length, contracting or expanding, depending on what you’re trying to execute,” he says. “We are doing something similar here. So imagine taking hundreds or thousands of modular units and using them to build your own elephant trunk.”

To do this, the researchers are closely examining the biology of a number of soft animal structures to better understand how muscle cells work in concert with tendons and other tissue to articulate movements. Trinkle and his students apply this biological data to construct a computer simulation of, in one example, an elephant trunk. The structure’s components and their connections to each other are represented with crisscrossing lines. Red lines stand in for active muscle cells and grey for fat and connective tissue, for example.

Using a simulation tool, Trinkle applies mathematical models to instruct the simulated appendage to curl around a simulated object such as a circle, representing what would be a disk in three dimensions.

To design the ‘brain’ or ‘map’ that will ultimately instruct a three-dimensional robot how to move, Trinkle uses techniques employed in building artificial neural networks, a type of machine learning that is modelled on the human brain. These neural networks are trained through data in a process similar to human learning. The data ‘trains’ the system through a process akin to ‘trial and error’. In this case, the network is trained with data generated by the computer simulation of an abstracted elephant trunk.

Getting the abstracted trunk to curl around the circle and, ultimately, move the circle to another part of the screen involves multiple steps and a lot of trial and error as the system is trained.

“[The system] doesn’t know anything, so when it tightens up the fibres on one side or the other, it doesn’t know in advance how it’s going to move, but the neural network is going to figure out that if it does certain things to its muscle fibres, it’s going to move a certain way,” says Trinkle. “Over a long time horizon, the simulation will figure some things out. It’s going to try to end up pushing the disk in the right location.”

He likens the process to an infant learning to crawl. “If a baby is trying to learn how to crawl, it’s going to do some things that won’t work, and eventually the infant figures it out,” says Trinkle. “At some point, all of a sudden, the baby solves the problems and now it’s crawling because its neural network has been trained from its experience.”

In this research, computer simulation is the training ground for robot systems the teams will build.
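The trial-and-error idea described in this section can be sketched in a few lines of Python. This is only a toy illustration, not the team's simulator or method: the two-dimensional "trunk", the `0.5` bend factor, the target position and every function name are assumptions made for the example, and plain random hill-climbing stands in for the neural network. The system starts out knowing nothing, tries small changes to its "muscle" activations in simulation, and keeps only the changes that bring the tip closer to the target.

```python
import math
import random


def trunk_tip(activations, segment_length=1.0):
    """Tip position of a toy 2D trunk made of straight segments.

    Each segment has a (left, right) pair of muscle activations in [0, 1];
    the segment bends according to the difference between the two.
    """
    x = y = 0.0
    heading = math.pi / 2  # relaxed trunk points straight up
    for left, right in activations:
        heading += 0.5 * (left - right)  # contracting the left side bends left
        x += segment_length * math.cos(heading)
        y += segment_length * math.sin(heading)
    return x, y


def distance_to(target, activations):
    x, y = trunk_tip(activations)
    return math.hypot(x - target[0], y - target[1])


def learn_by_trial_and_error(target, n_segments=8, trials=5000, seed=0):
    """Randomly nudge one segment at a time; keep a nudge only if it helps."""
    rng = random.Random(seed)
    best = [(0.0, 0.0)] * n_segments  # start fully relaxed
    best_error = distance_to(target, best)
    for _ in range(trials):
        candidate = list(best)
        i = rng.randrange(n_segments)
        left, right = candidate[i]
        left = min(1.0, max(0.0, left + rng.gauss(0, 0.1)))
        right = min(1.0, max(0.0, right + rng.gauss(0, 0.1)))
        candidate[i] = (left, right)
        error = distance_to(target, candidate)
        if error < best_error:  # this attempt worked better, so remember it
            best, best_error = candidate, error
    return best, best_error


target = (3.0, 5.0)  # hypothetical spot the tip should reach
initial_error = distance_to(target, [(0.0, 0.0)] * 8)
activations, final_error = learn_by_trial_and_error(target)
print(f"distance to target before: {initial_error:.3f}, after: {final_error:.3f}")
```

Every trial here costs a few arithmetic operations rather than wear on a physical robot, which is the practical appeal of doing the early, clumsy part of learning in simulation.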

Making robots

Trinkle and his students conduct experiments to extract “ground truth”, or directly observed information, to feed into their computer programme. They are currently working with a next-generation lightweight robotic arm designed specifically for academic and industrial research.

A key feature of the arm, according to Jinda Cui, PhD student and research assistant in Trinkle’s lab, is that it has “seven degrees of freedom”, or seven independently controlled joints that allow for superior reachability in the three-dimensional workspace. “This robot has one redundant joint that can move without impacting the end-effector,” says Cui.

This is significant because it means, for example, that the robot’s gripping mechanism, or “hand”, can remain in the same pose even while the other “arm” joints are moving. This is especially useful when the environment is cluttered and the robot needs to make a lot of adjustments, explains Cui. Still, the more joints it has, the more challenging a robot is to control.
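The effect of a redundant joint can be illustrated with a much simpler arm than the one in Trinkle's lab. The sketch below assumes a flat, three-joint arm whose only task is to hold its tip at a point in the plane: three joints for a two-dimensional task leaves one joint "spare". The link lengths, joint angles and function names are all made up for the example. It computes a combined joint motion that, to first order, leaves the tip where it is, which is the planar analogue of the arm moving while the hand stays put.

```python
import math

LINKS = (1.0, 0.8, 0.5)  # hypothetical link lengths


def tip_position(q):
    """Tip (x, y) of a planar arm with joint angles q (radians)."""
    x = y = angle = 0.0
    for length, joint in zip(LINKS, q):
        angle += joint
        x += length * math.cos(angle)
        y += length * math.sin(angle)
    return x, y


def jacobian(q):
    """Rows of partial derivatives of tip x and tip y with respect to each joint."""
    angles, total = [], 0.0
    for joint in q:
        total += joint
        angles.append(total)
    n = len(LINKS)
    # Joint j rotates every link from j outward.
    row_x = [-sum(LINKS[k] * math.sin(angles[k]) for k in range(j, n)) for j in range(n)]
    row_y = [sum(LINKS[k] * math.cos(angles[k]) for k in range(j, n)) for j in range(n)]
    return row_x, row_y


def null_space_direction(q):
    """Unit joint-space direction that does not move the tip (to first order)."""
    a, b = jacobian(q)
    # The cross product of the two Jacobian rows is perpendicular to both.
    d = (a[1] * b[2] - a[2] * b[1],
         a[2] * b[0] - a[0] * b[2],
         a[0] * b[1] - a[1] * b[0])
    norm = math.sqrt(sum(c * c for c in d))
    if norm == 0.0:
        raise ValueError("arm is in a singular configuration")
    return tuple(c / norm for c in d)


q = (0.3, 0.6, -0.4)
direction = null_space_direction(q)
step = 1e-4  # small step, because the result only holds to first order
q_moved = tuple(angle + step * d for angle, d in zip(q, direction))
print("tip before:", tip_position(q))
print("tip after: ", tip_position(q_moved))
```

On a real arm the same idea is applied continuously and in three dimensions, so larger "elbow" motions can be made while a controller keeps correcting the small drift that a single first-order step ignores.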

Another benefit of the advanced nature of the robot is that the team can access the low-level system that directly controls the joint movements.

“Through the internet or other communication protocols, we can communicate with the controller directly and tell the joints what to do,” says Cui. “We can modify the joint speed individually, or even how much current, or how much torque we want to give a particular joint.”

Trinkle and his team have also set up a motion capture camera system, similar to what is used to map the movement of actors in computer-generated imagery in film. Their system is designed to gather data about object positioning that would eventually inform their computer programme.

“If this robot is trying to manipulate something, like pick your phone up, the vision system should be able to track where your phone is and then the robot can adjust if the phone is not where it expected it to be,” says Trinkle.

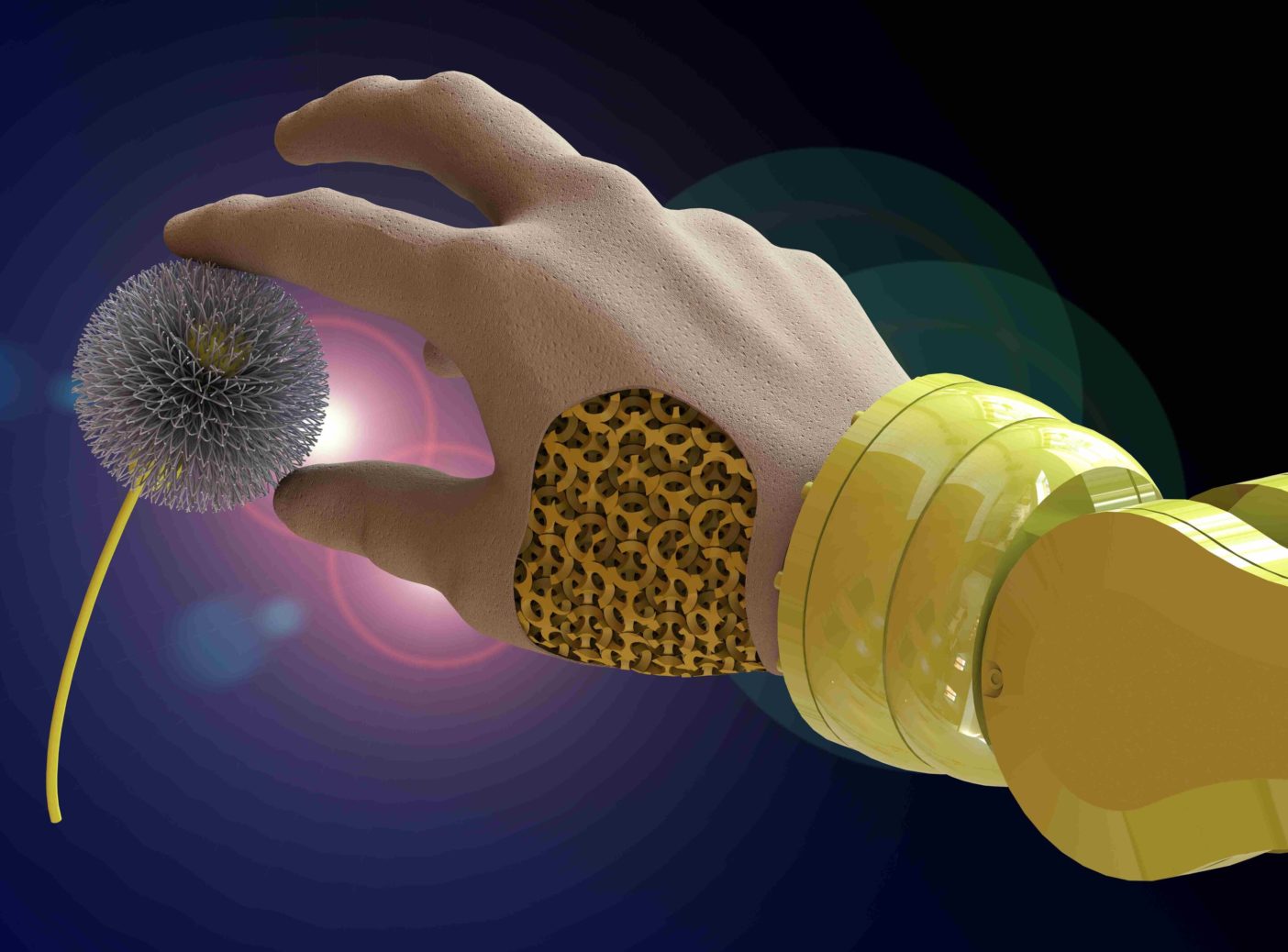

In the future, instead of an external motion capture camera system, robots will have built-in sensors that track object motion. The team’s robot comes with a three-dimensional camera already installed. For their experiments, Trinkle and Cui plan to add other detectors, such as tactile sensors, which would be important for programming the robot to note changing contact conditions, like an object’s slipperiness, and then be able to adjust for it. “Our work,” says Cui, “is to make it more intelligent, more autonomous, more adaptive.”

Closing the reality gap

Trinkle notes that training a robot’s control system with simulations has its shortcomings. When attempted in three-dimensional space, there is often a “reality gap”. The artificial neural network, trained from simulation data, once applied to a physical robot may try to perform the same task as in the simulation and fail. He says that occurs because the model that was learned from the simulation data was biased toward the way the simulation works.

The challenge for robotics researchers like Trinkle is to develop a solid simulation and then do some testing in the physical world, knowing that some retraining will be required. Hopefully, says Trinkle, researchers are just making small adjustments so that it works in the physical world without having to start the whole process over.

In other words, in developing this new approach to teaching soft robots, Trinkle will try to train the “baby” 90% of the way to crawling in simulation, and then get it the rest of the way there by experimenting on a physical robot.
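A deliberately tiny example can make the reality gap, and the appeal of small adjustments, concrete. Everything in the sketch below is an assumption for illustration: a straight-line model of how far a segment bends for a given muscle activation, a simulator that says the slope is `0.50`, and a pretend "physical" robot that actually responds with a slope of `0.45`, a small offset and some sensor noise. The model learned in simulation is then nudged with a few gradient-descent steps on just five physical measurements, and compared with spending the same small budget starting from nothing.

```python
import random


def predict(model, activation):
    slope, intercept = model
    return slope * activation + intercept


def fine_tune(model, xs, ys, steps=30, learning_rate=0.5):
    """A few gradient-descent steps on mean squared error, starting from `model`."""
    slope, intercept = model
    n = len(xs)
    for _ in range(steps):
        errors = [slope * x + intercept - y for x, y in zip(xs, ys)]
        slope -= learning_rate * 2 / n * sum(e * x for e, x in zip(errors, xs))
        intercept -= learning_rate * 2 / n * sum(errors)
    return slope, intercept


def mean_abs_error(model, xs, ys):
    return sum(abs(predict(model, x) - y) for x, y in zip(xs, ys)) / len(xs)


def real_bend(activation, rng):
    """Hypothetical physical robot: weaker response, small offset, noisy sensor."""
    return 0.45 * activation + 0.02 + rng.gauss(0, 0.002)


rng = random.Random(0)
sim_model = (0.50, 0.0)  # assumed to have been learned entirely in simulation

# A handful of expensive physical trials.
real_x = [0.1, 0.3, 0.5, 0.7, 0.9]
real_y = [real_bend(x, rng) for x in real_x]

# Separate physical measurements used only to judge the result.
test_x = [i / 100 for i in range(101)]
test_y = [real_bend(x, rng) for x in test_x]

adapted = fine_tune(sim_model, real_x, real_y)
from_scratch = fine_tune((0.0, 0.0), real_x, real_y)

error_sim_only = mean_abs_error(sim_model, test_x, test_y)
error_adapted = mean_abs_error(adapted, test_x, test_y)
error_scratch = mean_abs_error(from_scratch, test_x, test_y)
print(f"simulation only:          {error_sim_only:.4f}")
print(f"simulation + fine-tuning: {error_adapted:.4f}")
print(f"same budget from scratch: {error_scratch:.4f}")
```

With only two parameters the model could of course be refitted from scratch almost instantly; the point of the toy is the pattern, which matters far more when the model is a large neural network and each physical trial is slow.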

The team plans to build three full robot systems from modular motor units as testing prototypes: a soft robotic hand that can grasp a wide range of object sizes and shapes; a trunk-like structure with a static base that can grasp and manipulate forward and backward, up and down, and left and right; and a worm-like robot that can move freely over terrain with large obstacles. These robots will likely involve some sort of silicone skin to create a more continuous surface for contact.

As for robots someday being able to grasp, Trinkle notes: “It was a hot topic while I was in grad school and then it got cold for 15 years and then it got hot again. Perhaps because so many other problems were solved, and the remaining ones were just as hard as the grasping problem, so now there are a lot more people working on grasping again.

“And now that AI and neural networks have gotten so big, people are trying to apply those techniques in all sorts of different ways to grasping because it’s still the hard problem that it was 30 years ago.”

Though he believes there is a long way to go before scientists resolve the technical challenges of getting robots to grasp as well as humans or better, Trinkle acknowledges that those are not the only challenges that need to be overcome. “There are social issues such as: Will people want to be close to robots? Will they become friends?” he ponders.

There are ethical challenges to wrestle with as well. He points to autonomous cars as an example. In a difficult circumstance, he asks, should the car be programmed to save the driver and passengers, or save a passing pedestrian who might be impacted?

“There are so many different kinds of problems that people could study,” Trinkle adds. “When it comes to robots, who knows if society will be able to accept them and how far we will be able to advance the technology.”

This article originally appeared in the January 2021 issue of Robotics & Innovation Magazine